I was at the Taj last month for the 5th Annual Aikya Connect Family Office Forum. 85+ single family offices …

Category: Technology

The Wealth Management Industry Is About to Get AI’d.

I have spent 30+ years in technology. I have seen multiple waves of disruption: the internet, mobile, cloud, SaaS. Each …

The Tweet That Broke My Timeline (And It Wasn’t a Ferrari)

If you know me, you know I’d rather be writing about a $38.5 million Ferrari or the latest Concours d’Elegance. …

Transforming Wealth Management with AI Tools

In the world of AI, everything is cool until it hits your area of expertise. I saw the recent announcement …

Family Office Summit in Bengaluru

Recently, MProfit took part in the Transformance Forums Family Office Summit in Bengaluru. It was a gathering of around 100 …

My Hospital-Wingman, ChatGPT

I just spent 7 days at Breach Candy Hospital. Not only did the experience shift my outlook on life, but …

AI Code Editors: Are They Worth the Hype?

Driven by rapid innovation, significant investments, and an influx of funding, AI has taken center stage in today’s media landscape. …

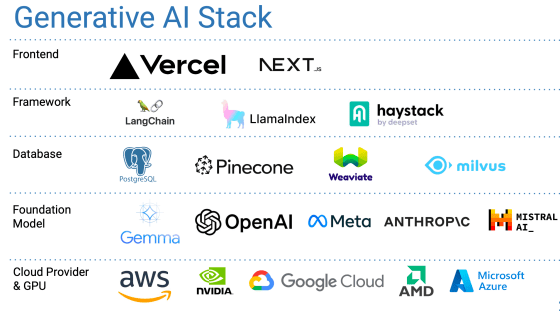

Generative AI Tech Stack

Around 12 years ago I created a slide deck called the Startup Engineering Cookbook to help non-technical …

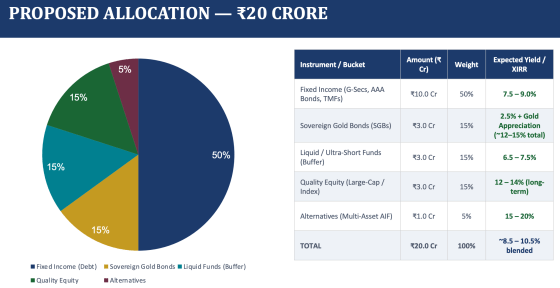

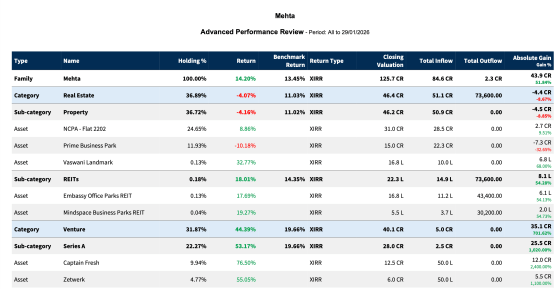

Family Office Financial Data: Your Money, Your Data

In my last two blog posts, The Future of WealthTech and What Is a Family Office, I explored two topics …